There are a certain class of differential equations which the four numerical solvers we have looked at (Euler, Heun, RK4 and RKF45) are numerically unstable. Unfortunately, this class of problems is difficult to define, though they appear quite regularly within the field of electrical engineering. This topic is mostly here to make you aware of such problems and to help you possibly recognize such situations. We will look at one solver in particular — a modification of Euler's method — to solve such problems.

I'd like to thank Allan Wittkopf for his suggestions and help.

Background

Useful background for this topic includes:

- 14.1 Euler's Method

References

- Bradie, Section 7.9, Absolute Stability and Stiff Equations, p.635.

- Weisstein, http://mathworld.wolfram.com/EulerBackwardMethod.html.

Theory

Consider the IVP

y(0) = 0

The solution to this IVP is the function

A plot of this solution is shown in Figure 1.

Figure 1. The solution to the given initial value problem.

If we use Euler's method to approximate a solution to this IVP using a step size of h = 0.1 for a few steps, we get the following values:

y(0.2) ≈ y2 = -0.0195162582

y(0.3) ≈ y3 = 0.1033409593

y(0.4) ≈ y4 = -0.0395932331

We immediately note a mistake with y2 which is negative, and rather than converging on the solution, the subsequent approximations appear to diverge. Figure 2 shows the solution and the first twenty iterations using Euler's method.

Figure 2. The first 20 approximations using Euler's method.

To demonstrate this more clearly, we can draw in the slopes followed by Euler's method at each step (drawing in the slopes), as is demonstrated in Figure 3.

Figure 3. Euler's method approximations together with slopes.

To understand this better, Figure 4 focuses on the solution and the directional field in the close vicinity of the solution.

Figure 4. The direction field plot for the given differential equation with the solution for the initial value y(0) = 0.

We note that even close to the solution, the slope of the direction field are very large and positive below the solution and negative above the solution. Such a differential equation is termed stiff. One method of solving such problems is to use a smaller value of h, however this may be unnecessarily expensive.

A better technique (rather than making h smaller) we will look at is a modification of Euler's method which is called backward-Euler's method.

Note: this problem was specifically tailored to fail for Euler's method with a step size of h = 0.1. While better techniques like 4th-order Runge Kutta will solve this problem satisfactorily, the problem can be made more stiff by substituting -21 with a larger negative number.

Backward-Euler's Method

For Euler's method, we approximate y(t1) with

where h = t1 − t0.

Instead, evaluate the differential equation at t = t1:

Replace the left-hand side with the backward divided-difference formula:

Next, replace y(ti) ≈ yi:

This gives us an implicit equation for y1:

We can now solve for y1 using any of our standard root-finding techniques. For example, substitute y1 = υ and rewrite the equation as

and let υ0 = y0 and υ1 = y0 + h f(t0, y0), that is, y(t0) and Euler's approximation to y(t1), respectively. We can now use the secant method to find solve for υ and we let y1 be this approximation.

We can then repeat this process arbitrarily often to approximate y2, y3, etc.

To demonstrate this in our example, we will work out two steps:

Finding y1

Given t0 = 0, t1 = 0.1, and y0 = 0, if we substitute these into:

we get the (rather simple) equation

In this case, we can trivially solve for υ = 0.02918830381, and therefore y1 = 0.02918830381.

Finding y2

To find y2, we use the equation

and substitute t2 = 0.2 and y1 = 0.02918830381 to get:

Again, this may be trivially solved for υ = 0.03582625132, and therefore we set y2 = 0.03582625132.

Further Solutions

If we continue this process, we get the approximations shown in Figure 5.

Figure 5. Approximations using the backward-Euler's method.

Higher-order Backward-Euler Methods

We have used the first-order backward divided-difference formula. There is no reason we could not have substituted the second-order backward divided-difference such as:

which, given two initial values y0 and y1, we could approximate y2, and then using y1 and y2, we could approximate y3, and so on.

Why does this work?

The critical question which you are probably asking is: why does this technique work when Euler's method does not? The following is a heuristic: consider the plot in Figure 6 which is a zoom of the solution shown in Figure 4.

Figure 6. A zoom of Figure 4.

Around the solution, all the direction arrows point in approximately the same direction of the solution, while further away, they tend to be more vertical.

If we apply Euler's method, we are following the slope, for example, in Figure 7 we follow the slope at the point (0.1, y(0.1)). This very quickly leads off into regions where the slope is large and negative, and therefore the next approximation with Euler's method will not point anywhere near the actual solution.

Figure 7. The tangent line at the point (0.1, y(0.1)).

If we apply Euler's method at (0.1, y(0.1)) for various values of h, Figure 8 shows the next iteration. Very quickly, the second iteration begins to diverge very quickly from the actual solution.

Figure 8. Two steps of Euler's method for values of h between 0.001 and 0.15.

Alternatively, the backward-Euler's method works as follows: going forward a distance h, what is the eligible point which could have had (0.1, y(0.1)) as a point on the solution? Figure 9 chooses a number of points around 0.2 and the lines passing through those points.

Figure 9. 21 various (0.2, y1) values and the backward paths.

Notice how only one of the points could have had (0.1, y(0.1)) as its predecessor. That point is approximately (0.2, 0.39) (the actual value being (0.2, 0.03902971461)). Figure 10 shows both Euler's method and the backward-Euler's method with h = 0.1.

Figure 10. Euler's and the backward-Euler's method at (0.1, y(0.1)) with h = 0.1.

The second-order backward-Euler's method uses a second-order backward divided difference approximation of the derivative. Given (0, 0) and (1, y(1)), Figure 11 shows the approximation of y(0.2) using this technique. In this case, the 2nd-order technique is not as good as the 1st-order technique demonstrated in Figure 10, however, for smaller values of h it becomes more accurate.

Figure 11. The second-order backward-Euler's method using (0, 0) and (0.1, y(0.1)).

HOWTO

Problem

Given the IVP

y(t0) = y0

approximate y(t1).

Assumptions

The function f(t, y) should be continuous in both variables.

Tools

We will use the backward divided-difference formula.

Initial Conditions

Set h = t1 − t0. Let y1 be the approximation of y(t1).

Process

Solve the equation

for &upsilon and set y1 = υ.

Examples

The following are not stiff differential equations, however, the techniques may still be applied.

Example 1

Given the IVP y(1)(t) = 1 - t y(t) with y(0) = 1, approximate y(1) with one step.

First, let t0 = 0, y0 = 1, and h = 1. Thus, we write down the equation

and, after substituting the appropriate values, we get

Solving this equation yields υ = 1, and therefore we set y1 = 1. The absolute error is 0.33.

Example 2

Given the same IVP shown in Example 1, approximate y(0.5).

First, let t0 = 0, t1 = 0.5, y0 = 1, and h = 0.5. Thus, we write down the equation

and, after substituting the appropriate values, we get

Solving this equation yields υ = 1.2, and therefore we set y1 = 1.2. The absolute error is 0.14 which is approximately the absolute error in Example 1.

Example 3

Repeat Examples 1 and 2 but with with the initial value y(0.5) = 2.5 and approximating y(1.5) and y(1.0).

To find y(1.5), let t0 = 0.0, t1 = 1.5, y0 = 2.5, and h = 1. Thus, the equation

Solving this equation yields υ = 1.4, and therefore we set y1 = 1.4. The actual value is y(1.5) = 1.502483616, and therefore the absolute error is 0.102.

To find y(1.0), let t0 = 0.0, t1 = 1.0, y0 = 2.5, and h = 0.5. Thus, the equation is

Solving this equation yields υ = 2, and therefore we set y1 = 2. The actual value is y(1) = 2.126611964 and therefore the absolute error is 0.127.

This absolute error is larger than it was when h = 1.0, and thus, to show that the error is O(h2), we must use smaller values of h. These are shown in Table 1.

Table 1. Errors when approximating y(t0 + h) for decreasing values of h.

| h | Approximation of y(0.5 + h) | Error |

|---|---|---|

| 1. | 1.4 | 0.102 |

| 0.5 | 2. | 0.127 |

| 0.25 | 2.315789474 | 0.0528 |

| 0.125 | 2.434782609 | 0.0160 |

| 0.0625 | 2.475471698 | 0.00434 |

| 0.03125 | 2.489913546 | 0.00112 |

| 0.015625 | 2.495519495 | 0.000285 |

| 0.0078125 | 2.497902608 | 0.0000719 |

| 0.00390625 | 2.498987283 | 0.0000181 |

| 0.001953125 | 2.499502670 | 0.00000452 |

| 0.0009765625 | 2.499753595 | 0.00000113 |

The quadratic behaviour becomes obvious with the last step, being smaller by almost exactly 4.

Engineering

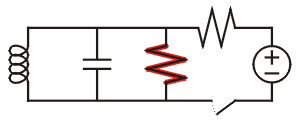

The circuit

Figure 1. Negative resistance oscillatory circuit.

where the highlighted resistor has negative resistance (e.g., a tunnel diode) may be described by a differential equation of the form

This second-order differential equation exhibits stiff behaviour for large positive values of α. When α = 0, it is a simple oscillatory circuit and harmonic motion.

Reference: Van der Pol Oscillators

Error

To derive the error, again, we simply use Taylor series, but in an appropriate form. Instead of approximating for y(t + h) given y(t), we approximate for y(t) given y(t + h):

Note that the second term is negative because t = (t + h) − h.

From the differential equation, we may substitute y(1)(t) = f(t, y(t)):

If we substitute y(t) = y0, y(t + y) = y1, and t + h = t1 into this equation, we get:

If we remove the h2 term, we get the equation which we are solving for the backward-Euler's method. With further manipulation, it can be argued that this implies that the error also drops with respect to h2.

Questions

The following are not stiff differential equations, however, the techniques may still be applied.

Question 1

Given the IVP

y(0) = 1

approximate y(0.5), y(1), and y(1.5) using the backward-Euler's method.

Answer: 1.377777778, 1.76, 2.145454545.

Question 2

Given the same ODE as in Question 1, but with the initial condition y(1) = 2, approximate y(1.5) and y(2.0).

Answer: 2.355555556 and 2.72

Matlab

To be completed.

Maple

Given the IVP y(1)(t = -21 y(t) + e-t y(0) = 0, to approximate y(t) on [0, 2] with h = 0.1, we can do:

N := 20:

t[0] := 0:

h := 0.1:

f := (t, y) -> -21*y + exp(-t):

for i from 1 to N do

t[i] := t[0] + i*h;

end do:

y[0] := 0:

for i from 1 to N do

y[i] := fsolve( upsilon - y[i - 1] = h*f( t[i], upsilon ) = 0 );

end do:

plots[pointplot]( [seq( [t[i], y[i]], i = 0..N )] );

Here we let Maple's fsolve routine fine the root of our equation.

Copyright ©2005 by Douglas Wilhelm Harder. All rights reserved.