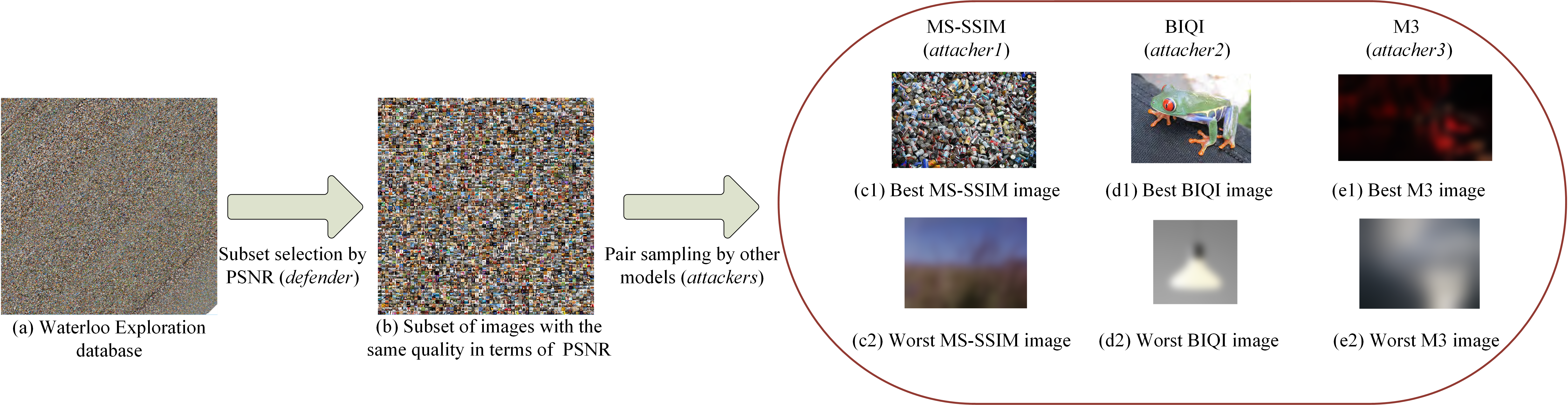

Objective image quality assessment (IQA) models aim to automatically predict human visual perception of image quality and are of fundamental importance in image processing and computer vision. As the number of proposed IQA models grows, fairly comparing their performance becomes a major challenge, owing to the enormous size of the image space and the limited resources available for subjective testing. The standard approach in the literature is to compute several correlation metrics between subjective mean opinion scores (MOSs) and objective model predictions on a few well-known subject-rated databases. These databases, however, contain distorted images generated from only a few dozen source images and thus provide an extremely limited representation of real-world images. Moreover, most IQA models developed on these databases involve machine learning and/or manual parameter tuning to boost their performance, so their generalization capability is questionable. Here we propose a novel methodology to compare IQA models. We first build a database that contains 4,744 source natural images, together with 94,880 distorted images created from them. We then propose a new mechanism, namely the group MAximum Differentiation (gMAD) competition, which automatically selects subsets of image pairs from the database that provide the strongest test for the IQA models to compete with each other. Subjective testing on the selected subsets reveals the relative performance of the IQA models and provides useful insights into potential ways to improve them. We report gMAD competition results among 16 well-known IQA models, but the framework is extensible, allowing future IQA models to be added to the competition.
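
The pair-selection idea can be sketched in a few lines. This is a minimal, hypothetical illustration, not the toolbox's code: it assumes the level-set formulation of gMAD, in which one model (the "defender") groups images into levels it rates as equally good, and a second model (the "attacker") picks, within each level, the image it rates best and the one it rates worst. The function name `gmad_pairs` and the parameters `num_levels` and `tol` are illustrative assumptions.

```python
from typing import List, Sequence, Tuple


def gmad_pairs(defender: Sequence[float], attacker: Sequence[float],
               num_levels: int = 5, tol: float = 0.05) -> List[Tuple[int, int]]:
    """Return one (attacker-best, attacker-worst) image-index pair per level.

    Hypothetical sketch: level centers are evenly spaced from the defender's
    minimum to its maximum score, and an image belongs to a level when its
    defender score lies within tol * (score range) of that center.
    """
    if len(defender) != len(attacker):
        raise ValueError("score lists must have the same length")
    if num_levels < 2:
        raise ValueError("num_levels must be at least 2")
    lo, hi = min(defender), max(defender)
    span = hi - lo
    pairs = []
    for level in range(num_levels):
        center = lo + span * level / (num_levels - 1)
        # Images the defender considers to be of (roughly) equal quality.
        members = [k for k, d in enumerate(defender)
                   if abs(d - center) <= tol * span]
        if len(members) < 2:
            continue
        # The attacker's strongest claim that these images actually differ.
        best = max(members, key=lambda k: attacker[k])
        worst = min(members, key=lambda k: attacker[k])
        if best != worst:
            pairs.append((best, worst))
    return pairs


defender = [0.10, 0.12, 0.50, 0.52, 0.90, 0.88]
attacker = [0.8, 0.2, 0.4, 0.6, 0.3, 0.9]
print(gmad_pairs(defender, attacker, num_levels=3, tol=0.1))
# [(0, 1), (3, 2), (5, 4)]
```

Each selected pair is then shown to human subjects: if they see a clear quality difference in the direction the attacker predicts, the defender has failed; if they do not, the attacker has. Swapping the two roles for every model pair yields the full set of competitions.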

@inproceedings{ma2016gmad,
author = {Ma, Kede and Wu, Qingbo and Wang, Zhou and Duanmu, Zhengfang and Yong, Hongwei and Li, Hongliang and Zhang, Lei},
title = {Group {MAD} Competition - A New Methodology to Compare Objective Image Quality Models},
booktitle = {IEEE Conference on Computer Vision and Pattern Recognition},
year = {2016}
}

Instructions:

- The gMAD toolbox depends on the LIVE Image Quality Assessment database, which must be downloaded separately from the LIVE lab website.

- Download the Waterloo Exploration database and the gMAD toolbox.

- Place your algorithm in the root folder of the toolbox and run the initialization script.

- After initialization, all generated image pairs are stored in the ./data/test_image folder, where you can get a first impression of how the models compete with each other.

- Run the `run_subjective_test` script to start the subjective test.

- Go to the ./support_functions/cvx folder and run the `cvx_setup` script. Then run the `data_analysis` script to obtain the global ranking results.
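
The final step turns pairwise competition outcomes into a single global ranking. The toolbox's `data_analysis` script relies on CVX and may solve a different, constrained problem; the sketch below instead swaps in a closed-form, HodgeRank-style least-squares ranking. It assumes the subjective results have already been summarized as a skew-symmetric matrix `Y`, where `Y[i][j]` is a hypothetical margin by which model `i` outperformed model `j`, and that every pair of models was compared. Under those assumptions, the scores `s` minimizing the sum over `i < j` of `(s[i] - s[j] - Y[i][j])**2`, subject to `sum(s) == 0`, are simply the row means of `Y`. The function name `global_ranking` is illustrative.

```python
from typing import List, Sequence, Tuple


def global_ranking(models: Sequence[str],
                   pairwise: Sequence[Sequence[float]]) -> List[Tuple[str, float]]:
    """Rank models from a complete, skew-symmetric pairwise margin matrix.

    Hypothetical sketch: returns (model, score) pairs sorted best-first,
    where each score is the least-squares solution on a complete comparison
    graph, i.e. the mean of that model's row.
    """
    n = len(models)
    if len(pairwise) != n or any(len(row) != n for row in pairwise):
        raise ValueError("pairwise matrix must be n x n")
    for i in range(n):
        for j in range(n):
            if abs(pairwise[i][j] + pairwise[j][i]) > 1e-9:
                raise ValueError("pairwise matrix must be skew-symmetric")
    # Row means are the zero-sum least-squares scores for a complete graph.
    scores = [sum(row) / n for row in pairwise]
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    return [(models[i], scores[i]) for i in order]


margins = [[0, 1, 2],
           [-1, 0, 1],
           [-2, -1, 0]]
print(global_ranking(["A", "B", "C"], margins))
# [('A', 1.0), ('B', 0.0), ('C', -1.0)]
```

A constrained solver such as CVX becomes necessary when some model pairs are missing or when the margins must satisfy extra constraints; the closed form above holds only for the complete, unweighted case.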

Run the `demo` script to see a sample program.

| Algorithm | Reference |
|---|---|
| PSNR | Peak signal-to-noise ratio |
| SSIM | Wang et al. Image quality assessment: from error visibility to structural similarity. TIP. 2004. |
| MS-SSIM | Wang et al. Multi-scale structural similarity for image quality assessment. Asilomar. 2003. |
| FSIM | Zhang et al. A feature similarity index for image quality assessment. TIP. 2011. |
| BIQI | Moorthy et al. A two-step framework for constructing blind image quality indices. SPL. 2010. |
| BLINDS-II | Saad et al. Blind image quality assessment: a natural scene statistics approach in the DCT domain. TIP. 2012. |
| BRISQUE | Mittal et al. No-reference image quality assessment in the spatial domain. TIP. 2012. |
| CORNIA | Ye et al. Unsupervised feature learning framework for no-reference image quality assessment. CVPR. 2012. |
| DIIVINE | Moorthy et al. Blind image quality assessment: from scene statistics to perceptual quality. TIP. 2011. |
| IL-NIQE | Zhang et al. A feature-enriched completely blind image quality evaluator. TIP. 2015. |
| LPSI | Wu et al. A highly efficient method for blind image quality assessment. ICIP. 2015. |
| M3 | Xue et al. Blind image quality assessment using joint statistics of gradient magnitude and Laplacian features. TIP. 2014. |
| NFERM | Gu et al. Using free energy principle for blind image quality assessment. TMM. 2015. |
| NIQE | Mittal et al. Making a completely blind image quality analyzer. SPL. 2013. |
| QAC | Xue et al. Learning without human scores for blind image quality assessment. CVPR. 2013. |
| TCLT | Wu et al. Blind image quality assessment based on multichannel features fusion and label transfer. TCSVT. 2016. |